Synchronizing block devices

Project | Article by Maarten Tromp | Published | 1010 words.

Introduction

Blocksync does for block devices what rsync does for files. Like rsync, it transfers only changes, saving bandwidth, write operations and time. Like dd, it works on files and block devices alike.

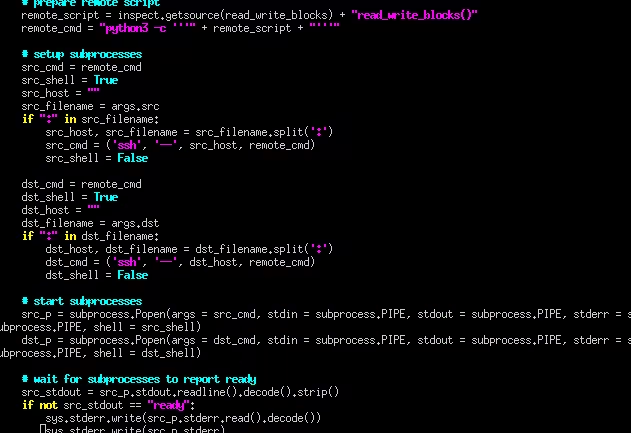

Blocksync code screenshot

At work we ran into a problem with the daily copying of large binary backup images to an off-site backup server. The images were multiple terabytes each, with only small changes from day to day. Typically you would use rsync to transfer only the changes, but source and destination were block devices, so file-based tools wouldn't work. The best we could do was dd over ssh to copy the entire image every day. This is not particularly efficient and takes quite a lot of time, but it got the job done. Over time the dataset grew, and one day copying the images took over 24 hours to complete. So we decided to look for a better solution.

Searching the internet, I found several possible solutions, each with its own advantages and disadvantages. For us the most promising option seemed to be blocksync. It's a Python script that can synchronize with a remote machine, uses delta transfer and, most importantly, works on block devices. Researching a bit further, I found at least 7 more similar scripts on GitHub alone, most of them also named blocksync. Each version had some specific additions or changes, but they were mostly the same. Some had features I'd like to use, but there didn't seem to be a single version that ticked all the boxes.

Earlier I had dismissed the idea of writing our own synchronization tool, on the basis that there must be something out there already that works. Surely there was a tool, like rsync, that had been developed over decades and was already better suited and better tested than anything we could make ourselves. Besides, writing our own seemed like a rabbit hole.

But there was no established block sync tool, at least not one that I could find. And reading the Python scripts, they didn't seem nearly as complex as I had feared. So instead of spending more time searching, I decided to spend my time hacking one of the Python scripts. For a moment I toyed with the idea of merging all those different versions into an ultimate blocksync, one that covers all use cases, but then I remembered that I had an actual problem to solve and the clock was ticking. I had never used Python before, but the language seemed easy enough to pick up.

After reading all the different versions of blocksync, I decided to start from scratch. It seemed easier to re-implement the bits I needed, learning as I went, than to start out with multiple complicated versions. My aim was to make a clean, minimal implementation that is easy to audit. To keep in line with the other versions, I named it blocksync.

While blocksync is written to address a specific issue, it's a generic tool that can be used for many things, as is the Unix philosophy.

Blocksync consists of 3 separate processes: a worker process on the source host, a worker process on the destination host, and a control process. When blocksync is started, the control process spawns the two worker subprocesses, one on the source host and one on the destination host. The worker processes are also Python, but with the worker function given as a command-line parameter. Remote processes are spawned over ssh, but work the same as local ones. By starting the subprocesses this way, there is no need to have blocksync present on remote hosts, only Python. All communication between the worker processes and the control process uses pipes, so the control process does not have to differentiate between local and remote subprocesses and there is no need to open up additional ports in the firewall.
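The spawning scheme described above can be sketched roughly as follows. This is not the actual blocksync code: the names `WORKER_SOURCE`, `worker_command` and `spawn_worker` are made up for illustration, the worker only prints checksums, and SHA-256 is an assumed choice of checksum.

```python
import shlex
import subprocess
import sys

# Hypothetical worker, passed to the interpreter as a command-line parameter.
# It prints one SHA-256 hex digest per chunk of the given file or device.
WORKER_SOURCE = """
import hashlib, sys
path, chunk_size = sys.argv[1], int(sys.argv[2])
with open(path, "rb") as f:
    while chunk := f.read(chunk_size):
        print(hashlib.sha256(chunk).hexdigest(), flush=True)
"""


def worker_command(host, path, chunk_size):
    """Build the argument list for a worker; host=None means run locally."""
    if host is None:
        return [sys.executable, "-c", WORKER_SOURCE, path, str(chunk_size)]
    # ssh passes the command to the remote shell as one string, so quote it.
    remote = ["python3", "-c", WORKER_SOURCE, path, str(chunk_size)]
    return ["ssh", host, shlex.join(remote)]


def spawn_worker(host, path, chunk_size):
    """Start a worker and return the process; its stdout is a pipe."""
    return subprocess.Popen(
        worker_command(host, path, chunk_size),
        stdout=subprocess.PIPE,
        text=True,
    )
```

Because both variants end up as a subprocess with a pipe on stdout, the caller can read digests from `spawn_worker(None, ...)` and from `spawn_worker("some-host", ...)` in exactly the same way, and the remote side needs nothing installed except Python.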

To find changes between source and destination block devices, both worker processes read their first chunk, generate a checksum, and send it to the control process. The control process compares the checksums. If they differ, the entire source chunk is passed to the destination worker, which overwrites the old chunk. Both worker processes then move on to their next chunk and repeat these steps until all chunks have been processed.
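To make the compare-and-overwrite loop concrete, here is a minimal single-process sketch that works on two local paths. It leaves out the worker and control processes entirely, and both the function name `sync_chunks` and the use of SHA-256 are assumptions for the sake of the example, not taken from blocksync itself.

```python
import hashlib

CHUNK_SIZE = 1024 * 1024  # 1 MB


def sync_chunks(src_path, dst_path, chunk_size=CHUNK_SIZE):
    """Overwrite only the chunks of dst that differ from src.

    Returns the number of chunks that were written.
    """
    written = 0
    offset = 0
    with open(src_path, "rb") as src, open(dst_path, "r+b") as dst:
        while True:
            src_chunk = src.read(chunk_size)
            if not src_chunk:
                break  # end of source reached
            dst.seek(offset)
            dst_chunk = dst.read(chunk_size)
            # Compare checksums rather than the chunks themselves, as the
            # control process would when the chunks live on different hosts.
            src_sum = hashlib.sha256(src_chunk).digest()
            dst_sum = hashlib.sha256(dst_chunk).digest()
            if src_sum != dst_sum:
                dst.seek(offset)
                dst.write(src_chunk)
                written += 1
            offset += len(src_chunk)
    return written
```

Running it a second time on the same pair should write nothing, which is a quick way to check that a sync completed.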

Blocksync key features are:

Source and destination can both be either local or remote. Like rsync, you can either push or pull, whichever you prefer. Blocksync can even be started from a third machine.

Blocksync works on both block devices and files. Like dd, source and destination can be of different types.

The only dependency is Python 3. There is no need to have blocksync present on remote machines.

Only changed chunks are transferred, saving bandwidth, write operations, and therefore time.

Blocksync uses ssh for communication with remote hosts. This makes all data transfer secure and authenticated, and there is no need to open any additional ports.

The source and destination sizes each have to be an exact multiple of the chunk size. With a 1 MB chunk size this is usually not a problem. The issue can be fixed, of course, but at the cost of added complexity without much benefit.

While blocksync is more efficient than copying an entire device, the entire source and destination devices still have to be read to look for changes. Without knowing beforehand where the changes are (i.e. metadata), there is no way around this.
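The chunk-size requirement can be checked up front, before any data is read. The sketch below is one possible way to do that and is not taken from blocksync; `device_size` and `chunk_count` are hypothetical helper names. The size is found by seeking to the end, since the size reported by `stat` for a block device node is typically not the device's capacity.

```python
import os


def device_size(path):
    """Return the size in bytes of a file or block device."""
    with open(path, "rb") as f:
        # seek() returns the new absolute position, i.e. the total size.
        return f.seek(0, os.SEEK_END)


def chunk_count(size, chunk_size):
    """Return how many chunks fit in size, or raise if it is not exact."""
    if size % chunk_size != 0:
        raise ValueError(
            f"size {size} is not a multiple of chunk size {chunk_size}"
        )
    return size // chunk_size
```

The chunk count also shows the cost noted above: every one of those chunks is read and checksummed on both sides, whether it changed or not.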

At work we use open source where possible and try to contribute as well. So blocksync is developed using free and open source software and in turn it's also released as open source.

The code and this article are released into the public domain. You can find all files in the downloads directory of this article.

At the time of writing, blocksync has been in use in a production environment for over a year, synchronizing tens of terabytes of data per day. It has never failed us or caused any data corruption that we noticed.

Thanks to the authors of all blocksync versions I've seen. They showed me what can be done, how to do it, and introduced me to the wonderful world of Python.